In recent years, Docker has earned an important place in the daily work of developers. Let’s take a quick look at this tool and find out how Docker works behind the scenes.

Features of Docker

One of the most important features Docker offers is near-instant startup. A Docker container can typically be started in a fraction of a second, whereas a virtual machine can take minutes to boot.
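To check the startup claim on your own machine, a rough measurement could look like the sketch below. It is not a benchmark: the helper names `docker_run_command` and `time_command` are made up for this example, and `alpine` is only an assumed example image. The `--rm` flag asks Docker to remove the container once it exits.

```python
import subprocess
import time


def docker_run_command(image, args=()):
    """Build a `docker run` command; --rm removes the container once it exits."""
    return ["docker", "run", "--rm", image, *args]


def time_command(cmd):
    """Run cmd to completion and return the elapsed wall-clock time in seconds."""
    start = time.perf_counter()
    subprocess.run(cmd, check=False,
                   stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
    return time.perf_counter() - start


if __name__ == "__main__":
    # "alpine" is an assumed example image; "true" exits immediately.
    print(" ".join(docker_run_command("alpine", ["true"])))
```

On a machine with Docker installed, `time_command(docker_run_command("alpine", ["true"]))` gives a rough figure. The first call also includes the time needed to download the image, so a second call is more representative. The measurement covers creating, running and removing the container, not just starting it.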

Docker relies on features of the Linux kernel to start and interact with containers. Because of this dependence, when Docker runs on other systems, such as macOS, an additional layer of virtualisation is started. Docker for Mac normally “masks” this layer: as a user, you will not notice the difference, except in terms of speed.
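The article does not name the kernel features involved; the best known ones are namespaces (which isolate what a process can see) and control groups (which limit the resources it can use). As a small illustration, the sketch below lists the namespaces the current process belongs to by reading `/proc/self/ns`. The function name `current_namespaces` is invented for this example, and the directory only exists on Linux, so on other systems the function simply returns an empty result.

```python
import os

NAMESPACE_DIR = "/proc/self/ns"  # Linux only; absent on macOS and Windows


def current_namespaces(ns_dir=NAMESPACE_DIR):
    """Return {namespace type: identifier} for the calling process."""
    namespaces = {}
    try:
        names = sorted(os.listdir(ns_dir))
    except OSError:
        return namespaces  # not on Linux, or /proc is not mounted
    for name in names:
        try:
            # Each entry is a symlink whose target looks like "pid:[4026531836]".
            namespaces[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            continue  # skip entries we are not allowed to read
    return namespaces


if __name__ == "__main__":
    for name, ident in current_namespaces().items():
        print(f"{name:10} {ident}")
```

Running the script once on a Linux host and once inside a container should generally show different identifiers for entries such as `pid`, `net` and `mnt`, which is a sign that the container lives in its own namespaces while still using the same kernel.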

What is virtualisation?

Docker is a tool used to run containers. Containers are similar to virtual machines in that they simulate a machine running inside your real computer. If you have ever used VirtualBox or VMware, for example, you may be familiar with the virtual machines used to run Windows on a Mac.

A virtual machine simulates all parts of a real computer, including the screen and the hard drive. On the real computer (often referred to as the host), that hard drive is just a single large file, called a virtual hard drive. On a virtual machine (or VM) running Windows, the virtual hard drive contains the entire Windows operating system, which can be several gigabytes in size.

Windows does not know that it is running inside a simulation rather than on a real computer; it simply “thinks” that it is the main operating system. To be clear, Docker, like VirtualBox, “virtualises” an operating system environment within a host operating system.

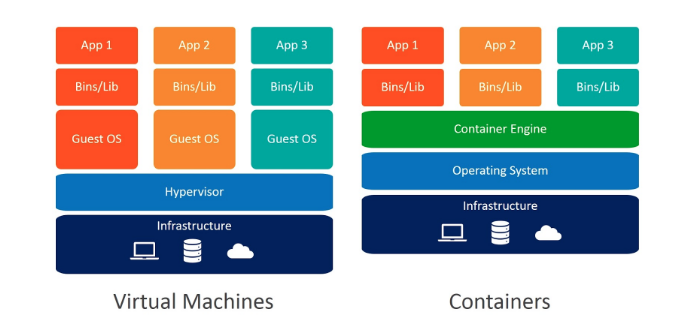

What is the difference between a VM and a container?

Running a virtual machine can be demanding on the processor. In the example above, the Mac host performs not only all the macOS background tasks but also all the Windows background tasks, which puts a heavy load on the host.

The host operating system controls how much processing power it gives to each program, the VM included, and this is precisely why virtual machines often run very slowly.

Running multiple VMs at the same time is therefore particularly taxing: it means asking one computer to run several operating systems at once while maintaining huge virtual hard drives, each containing its own operating system.

Running multiple instances of the same operating system is often redundant and unnecessary, and the extra load also risks destabilising the virtualised operating systems.

Containers, on the other hand, share redundant resources, such as the host’s kernel and some large operating system files, and hardware resources are allocated dynamically according to what each container needs at the time.
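Dynamic allocation does not mean a container has to be unlimited: Docker can cap a container’s memory, for instance with the `--memory` option of `docker run`. The sketch below shows one way a program could read that cap from inside a container. It assumes a Linux host using cgroup v2, where the limit is exposed in the file `/sys/fs/cgroup/memory.max`; the function names are invented for this example, and older cgroup v1 systems use a different path that is not handled here.

```python
from pathlib import Path

# cgroup v2 location as seen from inside a container (assumed setup).
MEMORY_MAX = Path("/sys/fs/cgroup/memory.max")


def parse_memory_max(text):
    """Convert the contents of memory.max to bytes; None means no limit."""
    value = text.strip()
    return None if value == "max" else int(value)


def memory_limit(path=MEMORY_MAX):
    """Return the memory limit in bytes, or None if unlimited or unavailable."""
    try:
        return parse_memory_max(path.read_text())
    except (OSError, ValueError):
        return None  # file missing (not cgroup v2) or unexpected contents


if __name__ == "__main__":
    limit = memory_limit()
    print("no memory limit found" if limit is None else f"limit: {limit} bytes")
```

A container started without a memory option would be expected to report no limit, which matches the idea that it simply draws on the host’s resources as needed.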

In conclusion

Docker containers are very useful for creating isolated environments in which you can run separate programs without them interfering with each other.

In fact, they make developers’ lives much easier: developers can work in an isolated environment without interfering with the configuration of the host.

If you want to learn more about Docker, you can read “How to get started with Docker” to find out how to write a Dockerfile, create custom images and understand how to use Docker Compose to orchestrate different Docker containers.

